Patent prosecutors working in the AI and software space often believe they are following best practices when they prepare specifications built around system diagrams, high-level architecture, and flowcharts that track the claimed functionality. The figures walk through the data flow. The detailed description mirrors the figures. The claims align with those same steps. From a stylistic standpoint, the application appears clean, modern, and technically credible. Yet recent decisions continue to demonstrate that this approach can create a written description failure that is not correctable after filing.

The written description requirement is not primarily concerned with whether a person of ordinary skill could implement the invention after reading the claims. It instead asks whether the specification demonstrates that the inventor actually possessed the claimed subject matter at the time of filing. As the Board explained, the purpose of the requirement is to ensure that the scope of the right to exclude, as set forth in the claims, does not overreach the scope of the inventor’s contribution to the field of art as described in the patent specification. The inquiry is therefore evidentiary, not merely technical. A disclosure that looks enabling in a general sense can still fail to show possession of the particular implementation being claimed.

This distinction becomes acute in AI-related inventions because of how the courts and the USPTO have applied the requirement, particularly where applicants describe results-oriented training processes while omitting the mechanics of how those results are achieved. A description that merely renders the invention obvious does not satisfy the requirement. In other words, describing what a neural network is expected to accomplish, even if that outcome would be routine for a skilled practitioner, does not demonstrate that the inventors had actually developed and possessed the specific solution being claimed.

A recent appeal (Appeal 2025-002921, Application 17/352,732) illustrates how an AI disclosure that appears well drafted on a surface-level review can nevertheless fail under § 112(a). Claim 1 on appeal is reproduced below; the element at issue is the training step:

1. A method for analyzing signals, comprising:

receiving first cardiac signal segments responsively to electrical activity sensed by a first sensing electrode in contact with tissue of a first living subject;

injecting the received first cardiac signal segments into a recording apparatus cable, which extends to a recording apparatus, the cable outputting corresponding noise-added cardiac signal segments responsively to electrical noise acquired in the cable, wherein the injecting includes injecting the received first cardiac signal segments into a first end of the recording apparatus cable;

electrically connecting a first end of a shielded cable to a second end of the recording apparatus cable, wherein a second end of the shielded cable outputs the noise-added cardiac signal segments;

training an artificial neural network to at least partially compensate for electrical noise that will be added to cardiac signals in the cable responsively to the received first cardiac signal segments and the corresponding noise-added cardiac signal segments, wherein the training is performed responsively to the received first cardiac signal segments and the corresponding noise-added cardiac signal segments output by the second end of the shielded cable;

receiving a second cardiac signal responsively to electrical activity sensed by a second sensing electrode in contact with tissue of a second living subject;

applying the trained artificial neural network to the second cardiac signal yielding the second cardiac signal with noise-compensation, which at least partially compensates for the electrical noise, which is not yet in the second cardiac signal but will be added to the second cardiac signal in the cable; and

outputting the second cardiac signal with the noise-compensation to the recording apparatus via the cable.

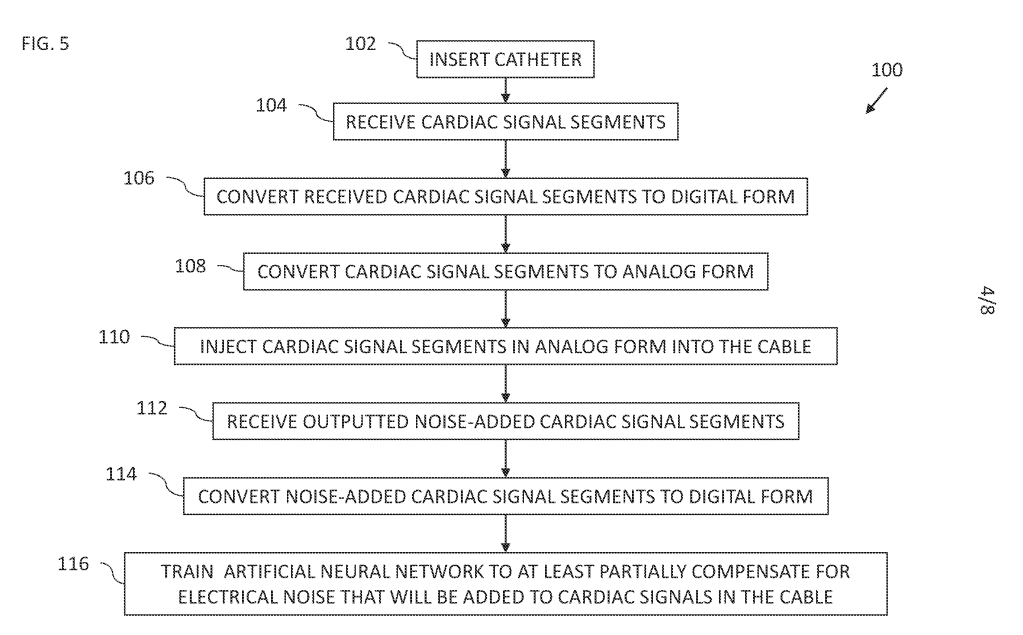

The specification included system figures, signal-processing context, and multiple flowcharts describing acquisition of cardiac signals, introduction of noise, and training of an artificial neural network to compensate for that noise. The applicant relied heavily on those figures and accompanying prose as evidence that the training step was adequately described, arguing that the disclosure “illustrate[d] in flow chart form and describe[d] in corresponding prose detailed embodiments of the claimed training.”

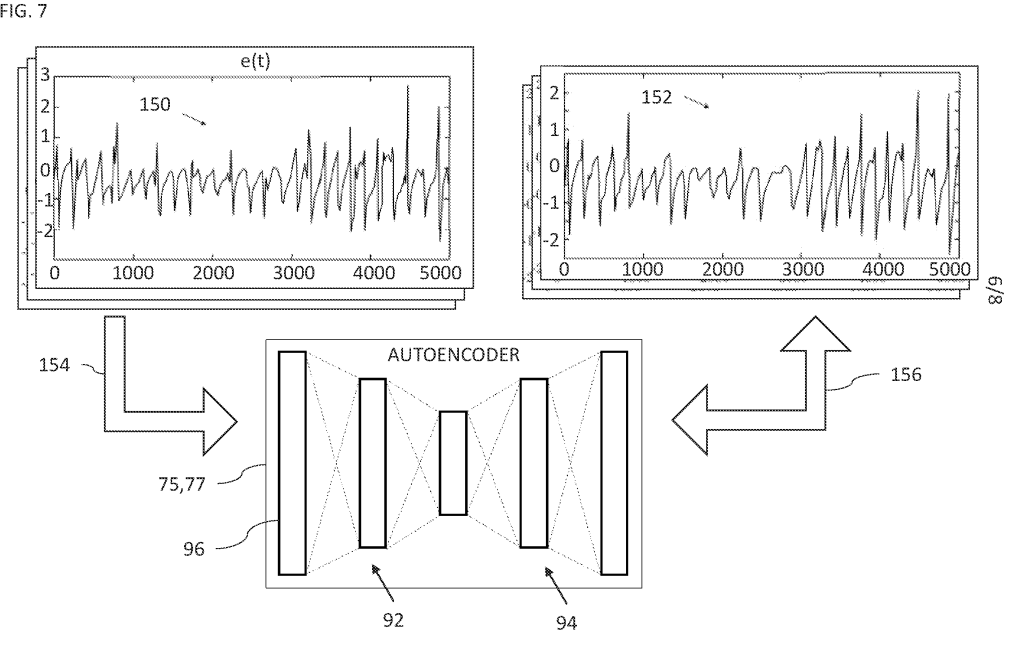

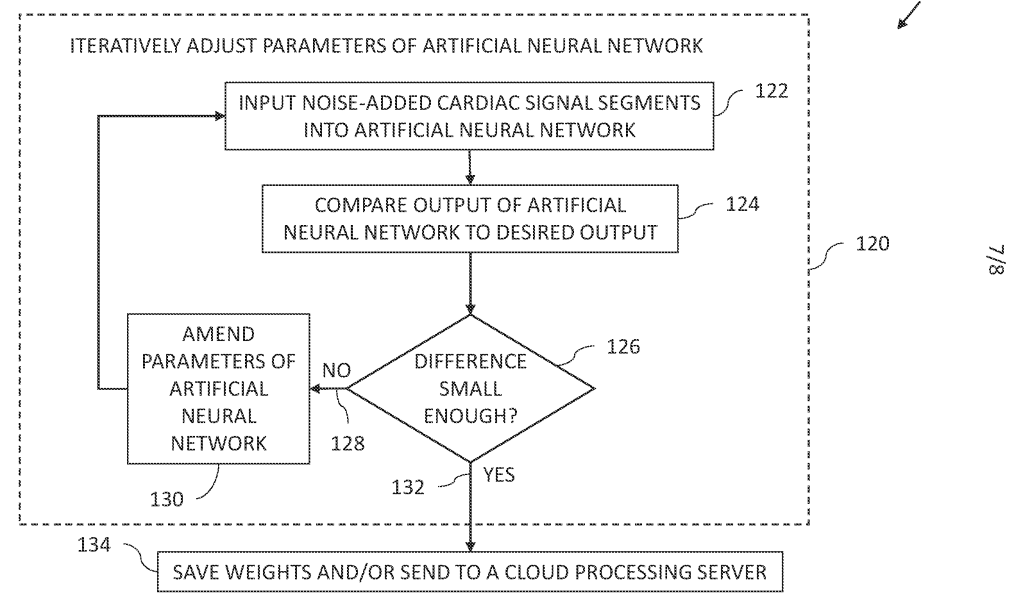

The Board found the disclosure lacking because the disclosure never moved beyond functional description into implementation detail. A central problem identified by the Examiner and affirmed on appeal was that the specification described the existence of training but not how the training was actually performed. The decision noted that the specification provides insufficient detail to the algorithm used in the artificial neural network. Although the figures depicted signal comparisons and iterative adjustment, they did not explain what was being calculated, what features were evaluated, or how parameter updates were determined.

The Board found that describing a comparison between noisy and non-noisy signals was not enough because the disclosure fails to explain how the noisy signal and the normal cardiac signal are compared and what is used in the comparison to figure out the noise added. Likewise, references to adjusting parameters did not establish possession of the claimed solution where the specification did not explain what these weight adjustments of the parameters would be changing, which specific weight parameters are being changed, and what exactly are these weights/parameters directed to.

Notably, the application did mention known machine-learning components, including an encoder/decoder structure and even the use of an optimizer. But naming familiar tools did not save the disclosure. The Board characterized the discussion as describing a neural network “at a high level of generality” and concluded that it fails to provide detail as to the algorithm used in training the neural network as recited in claim 1. The specification, in the Board’s view, amounted to little more than a restatement of the claim language in narrative form, which is precisely what written description jurisprudence warns against.

The decision also relied on examination guidance reflected in MPEP § 2161.01(I), which instructs that for computer-implemented functional claims the specification must disclose the computer and the algorithm performing the function in sufficient detail to demonstrate possession. When that detail is missing, a rejection under 35 U.S.C. 112(a) for lack of written description must be made. The Board applied that principle directly, emphasizing that flowcharts and functional language are not substitutes for algorithmic disclosure.

One of the more instructive aspects of the decision is what the Board identified as missing. The specification did not define what constituted a sufficiently small error, what signal characteristics were evaluated, or what feature extraction or comparison methodology was used. The Board observed that the disclosure did not explain whether the relevant difference related to “peak values, SNR, zero crossings, or any other feature extraction technique.” Nor did it explain how the computed information translated into an actual compensation signal. These are precisely the types of details that inventors often dismiss as routine implementation, yet they are what demonstrate possession.
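To make the Board's list concrete, the following is a minimal Python sketch of what explicitly defined comparison features could look like. It is illustrative only: the function names (`peak_value`, `zero_crossings`, `snr_db`, `compare`), the feature definitions, and the sample values are assumptions chosen for the example, not anything taken from the application or the decision.

```python
import math


def peak_value(segment):
    """Largest absolute amplitude in the segment."""
    return max(abs(s) for s in segment)


def zero_crossings(segment):
    """Number of adjacent sample pairs where the sign flips around zero."""
    return sum(1 for a, b in zip(segment, segment[1:]) if (a < 0) != (b < 0))


def snr_db(clean, noisy):
    """Signal-to-noise ratio in dB, treating (noisy - clean) as the noise."""
    signal_power = sum(c * c for c in clean) / len(clean)
    noise_power = sum((n - c) ** 2 for c, n in zip(clean, noisy)) / len(clean)
    if noise_power == 0:
        return math.inf
    return 10 * math.log10(signal_power / noise_power)


def compare(clean, noisy):
    """Report how the noise-added segment differs from the clean one."""
    return {
        "peak_delta": peak_value(noisy) - peak_value(clean),
        "zero_crossing_delta": zero_crossings(noisy) - zero_crossings(clean),
        "snr_db": snr_db(clean, noisy),
    }


if __name__ == "__main__":
    # Hypothetical segment pair: the "noise" here is a constant 0.5 offset.
    clean = [0, 1, 0, -1, 0, 1, 0, -1]
    noisy = [c + 0.5 for c in clean]
    print(compare(clean, noisy))
```

A specification does not need code of this kind, but a disclosure that states which of these quantities is computed, and how, answers the question the Board said was left open.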

This creates a recurring tension during drafting. Inventors frequently resist inclusion of such material because they view it as obvious, not inventive, or outside the “core” of the invention. Internal drafting norms may reinforce that instinct by encouraging specifications that track claim language and avoid unnecessary specificity. But in the AI context, omitting those implementation details can convert a seemingly strong application into one that never sufficiently describes the invention being claimed. The result is not merely a rejection, but a disclosure gap that cannot be cured without adding new matter.

For prosecutors responding to similar written description rejections, it is critical to focus arguments on where the specification conveys possession of a specific implementation rather than relying only on the general knowledge of a skilled artisan or the ubiquity of machine-learning techniques. Pointing to flowcharts or asserting that training methods are well known may not succeed if the application does not tie those methods to the claimed objective in a concrete way. Effective responses instead identify passages that describe defined inputs, transformations, evaluation criteria, parameter updates, or model structures linked to the claimed functionality, and explain how those disclosures evidence a particular solution rather than an aspirational result.

For those drafting new AI-based applications, one lesson is to include at least one technically coherent example of how the model is actually trained and used in the context of the invention. That example should describe what data is used, how it is processed, what features are evaluated, what loss or comparison is performed, how parameters are updated, and/or how the resulting model generates the claimed output. The goal is not to claim the machine-learning technique itself, but to demonstrate that the inventors possessed a concrete way of achieving the claimed result. Including this level of detail does not narrow the claims when properly framed, but it does provide the evidentiary support needed to survive § 112(a).
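The level of detail described above can be sketched in a few lines of Python. This is a deliberately simplified stand-in, not the applicant's method: it assumes a two-parameter affine model instead of a neural network, synthetic sinusoids instead of cardiac signals, a mean-squared-error loss, plain gradient descent, and a hypothetical stopping threshold `tol`. All names and numeric values are assumptions made for illustration.

```python
import math
import random


def make_pairs(n_segments, seg_len, rng):
    """Synthetic (clean, noise-added) segment pairs.

    Assumed noise model: gain error of 0.8, DC offset of 0.3,
    plus small Gaussian jitter.
    """
    pairs = []
    for _ in range(n_segments):
        phase = rng.uniform(0, 2 * math.pi)
        clean = [math.sin(2 * math.pi * 3 * t / seg_len + phase)
                 for t in range(seg_len)]
        noisy = [0.8 * c + 0.3 + rng.gauss(0, 0.01) for c in clean]
        pairs.append((clean, noisy))
    return pairs


def train(pairs, lr=0.1, max_epochs=2000, tol=1e-3):
    """Fit y = w * x + b so that the corrected noisy sample matches the clean one.

    Loss: mean squared error over all samples.
    Update: full-batch gradient descent on w and b.
    Stop: when the loss falls below `tol` (the "sufficiently small error").
    """
    w, b = 1.0, 0.0
    loss = float("inf")
    for _ in range(max_epochs):
        grad_w = grad_b = total = 0.0
        n = 0
        for clean, noisy in pairs:
            for c, x in zip(clean, noisy):
                err = (w * x + b) - c      # comparison: prediction vs. clean sample
                total += err * err
                grad_w += 2 * err * x      # d(err^2)/dw
                grad_b += 2 * err          # d(err^2)/db
                n += 1
        loss = total / n
        if loss < tol:
            break
        w -= lr * grad_w / n               # parameter update
        b -= lr * grad_b / n
    return w, b, loss


if __name__ == "__main__":
    rng = random.Random(0)
    w, b, loss = train(make_pairs(20, 50, rng))
    print(f"w={w:.3f} b={b:.3f} loss={loss:.5f}")
```

Each element the paragraph lists has a counterpart here: the training data (`make_pairs`), the comparison and loss (`err`, mean squared error), the parameter update (the `w` and `b` steps), and the stopping criterion (`tol`). A real specification would describe the equivalent choices for the actual model in prose, equations, or pseudocode.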

In AI patent drafting, the paradox is that details everyone assumes are unnecessary are often the very details needed to prove possession. The specification must teach more than the idea of training a model; it must show how the model is actually trained.
